News Story

KMi’s Stefan Rueger presents Dr Ian Witten for honorary doctorate

Tuesday 3 Oct 2017

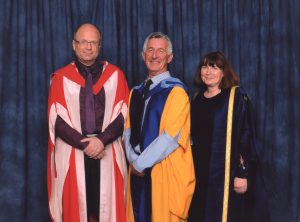

Prof Stefan Rueger of KMi presented Dr Ian Witten of Waikato University to Executive Dean Mary Kellett at Friday’s Degree Ceremony. Ian received an honorary doctorate, "Doctor of the University", from the OU for services to the educationally underprivileged.

Ian Witten is a computer scientist who has contributed to research in several areas of his discipline: over his career he has worked on compression algorithms, information retrieval, machine learning and digital libraries. A hallmark of his lab is that its research is almost always accompanied by software implementations and textbooks, so that it reaches a much wider audience than the immediate research community. For example, the textbook ‘Managing Gigabytes’ accompanied his open-source text search engine MG, and a highly acclaimed data mining book (translated into many languages, including Korean) accompanied the lab’s open-source Weka toolkit, which has been adopted by data mining researchers all over the world.

However, Ian Witten’s main influence beyond academia comes from his outstanding service to the educationally underprivileged through the Greenstone Digital Library Suite (http://www.greenstone.org/), which was developed under his direction. Greenstone is an open-source platform for publishing books and documents on the Internet, or on CD-ROM for use on local, unconnected machines. It has enabled thousands of communities, non-governmental organisations and others to distribute complex and rich material on a very wide range of low-cost hardware. Greenstone has become a high-profile digital library system that is widely used in less-developed countries, where it has effectively become the standard for distributing digital libraries. Co-sponsored by UNESCO, Greenstone has user interfaces in more than 50 languages, full documentation in 5 languages, and a large and avid user community that provides support.

KMi has a special relationship with both the Greenstone digital library and the team that developed it. David Bainbridge, who succeeded Ian Witten as director of the project, is an affiliate of KMi and has visited KMi in the past, collaborating with Stefan Rueger on multimedia retrieval methods that have found their way into the Greenstone Digital Library. Stefan and other KMi team members have visited the Greenstone team on numerous occasions over the last decade, each time researching and co-developing new modules and new document access mechanisms. This collaboration was partially funded by two EPSRC grants and by grants held at the University of Waikato.
